Our first article (What is AI?) highlighted that artificial intelligence (AI) already has a huge impact on our lives. People are concerned about AI replacing jobs or being misused, with good reason. So here we take a broad look at the ethics of AI.

To err is human; to really foul things up requires a computer

– Bill Vaughan

AI is software: it’s no more intrinsically good or bad than a database or website. Because AI has great power, the way we apply it is critically important. It is vital that developers understand how to address the technology’s limitations, which may not be apparent as it grows easier to use. We can learn from solving similar problems in medicine and safety-critical systems, and the AI community must adopt this good practice. Take steps to mitigate the risks of AI systems that you develop, buy or use.

As for the impact on people, there are certainly cases where AI will affect jobs, in a similar way to earlier waves of technology like the computer revolution. Businesses face the commercial reality that competitors use AI. Criminals and governments do too. Working intelligently with AI to augment human capabilities, we can cut through repetitive tasks, reduce our impact on the environment, and improve justice. AI can save lives.

This is important for Granta Innovation, because we work with businesses to understand and benefit from new technology. We highlight the risks we identify, and only take on projects with reasonable potential to bring substantial value to our customer.

We must all use AI carefully, weighing genuine costs and benefits. Working together, society and governments should plan for the changes it will bring. The six principles of ethical AI set out in this article can help. It is our job to make good from AI.

AI is software

Science fiction authors and some philosophers write of AI as a sentient being. It’s intriguing to consider future scenarios where electronic computation becomes cheap, fast and ubiquitous, and we might allow algorithms to run on this computing fabric, develop themselves, and perhaps replicate and evolve in ways we might not understand. Nick Bostrom and many others have written about some of the issues that thinking machines like this could entail.

The reality of AI today, and in the near future, is simpler. AI is a branch of engineering and computer science centred on processing numerical data to guess outputs. The smartest AI systems – for example, Alexa, Siri and Watson – are software application platforms. Their developers built them with AI components and a great deal of data and human ingenuity.

For this reason, researchers and developers often prefer to use the term machine learning or ML, as we explained in our introduction on AI.

Alexa, is AI ethical?

AI can use physical interfaces, like displays, microphones and loudspeakers, and today’s systems already benefit from specialist hardware, but all of what we might call intelligence happens in the software and how it processes data.

Research on developing the hardware and software that enables AI is fascinating and technically impressive. Graphcore and NVIDIA are two of many companies working on how to make machine learning faster and more powerful. Google projects like AutoML are making great progress in how to transfer learning from one field (such as recognising Internet images) to another (for example, recognising garden plants).

Even in these exciting scenarios, the AI is software, and as we highlight later, needs oversight by a human.

It’s what you do with it

A spreadsheet program can calculate a bomb trajectory, or improve crop yield. Software like AI is the same: the benefit or harm that it may produce depends entirely upon how it is used.

There is no doubt that people use AI to cause damage. For example, the cruise missiles that the United States and allies deployed in the First Gulf War performed image processing to guide them to their target. Much has been written recently about how well-funded political or foreign groups, working with a small set of companies with tools like AI, influenced large numbers of voters in Donald Trump’s election and the Brexit referendum. Criminals are now using language generators and chatbots to try to obtain people’s personal details and so break into their bank accounts or corporate systems.

Using AI for good

Many of us use AI for good reasons. Speech recognition is perhaps the most obvious, especially with the convenience of using Siri and Google Assistant on phones. Amazon is now reported to sell 10 million Alexa devices a year. We run these because they work and they make some tasks easier to do. They can be fun too.

In the right hands, AI can help protect us. Network monitoring company Darktrace uses the adaptive capabilities of AI to find hard-to-detect threats such as employees copying data, or compromised computers connecting to an attacker’s remote servers. If machine learning can improve the usability of a security system, it could make protection better still.

In medicine, we face huge challenges simply to provide an adequate level of care with limited resources. Processes from drug discovery to diagnosis and treatment can benefit from machine learning. This isn’t about replacing doctors – AI can help meet the urgent need to make healthcare professionals more productive.

Internet giants like Microsoft and Facebook are investing in AI to help extend their business. But you don’t have to be a billion-dollar corporation to use AI to your own benefit. Every company and government organisation will be touched by AI in some way. Who else in your market is already applying machine learning? What could you do with it?

What to watch out for

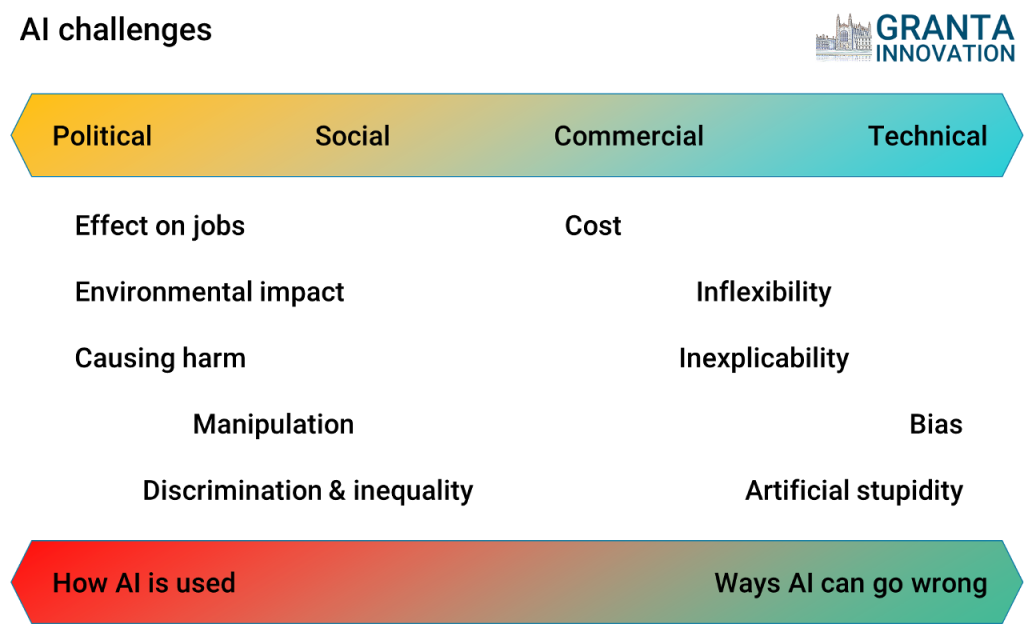

It’s up to us to use AI in ethical ways, so what hazards do we need to be aware of? Knowing them helps us to identify, and hopefully avoid, the risks they might bring. The diagram outlines some issues common to many applications of techniques like machine learning.

AI issues span several dimensions, which this diagram simplifies to two axes: one running from political and social issues to commercial and technical ones, and the other from how AI is used to how AI can itself malfunction.

Political and social

Governments may be most concerned about the factors highlighted on the left of this diagram. AI will certainly affect jobs, making some obsolete, changing many, and creating others, as we discussed in our Introduction to AI. AI could create environmental impacts, ranging from positive benefits such as improving industrial efficiency, to damage such as the direct energy consumption of AI systems, which are essentially powerful computers. Military, criminal and surveillance uses of AI have well-understood potential to cause harm.

Like mobile phones, AI will influence individuals and society, in ways that we do not fully understand. There will be benefits, giving us more free time and augmenting our knowledge, but challenges too. AI could help manipulate people, political systems and markets. Adoption of AI in industrial, education, insurance and medical systems might worsen discrimination against groups or people, or promote inequality. These issues depend on how humans make use of AI, not the technology itself.

Commercial and technical

On the right of the diagram are issues intrinsic to machine learning and AI technologies. Like any software, AI can fail, or have costs that exceed the value gained. Machine learning is critically dependent on the data used in training, and can give unpredictable or simply incorrect results when conditions change. The way AI is set up makes bias common, and hard to eliminate, especially if the training data is itself biased.
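One simple way to look for the kind of bias described above is to compare how often a system gives a favourable decision to different groups. The sketch below is illustrative only: the group labels, the decision records and the function names `selection_rates` and `rate_ratio` are all made up for this example, and a real assessment would need far more care over which measure is appropriate.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the fraction of favourable decisions for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        if approved:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def rate_ratio(rates):
    """Ratio of the lowest to the highest rate (1.0 means equal rates)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody received a favourable decision
    return min(rates.values()) / highest


# Hypothetical decisions from an AI system: (group label, approved?)
records = (
    [("A", True)] * 60 + [("A", False)] * 40
    + [("B", True)] * 30 + [("B", False)] * 70
)

rates = selection_rates(records)
ratio = rate_ratio(rates)
print(rates, ratio)
```

A ratio well below 1.0, as in this invented data, does not prove that a system is unfair, but it is a prompt to examine the training data and the decisions more closely.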

AI systems are essentially computer programs that make predictions based on training data. As a result, they can be fragile and inflexible, and may need adaptation or re-training in future. Inexplicability is a problem with modern AI techniques like deep learning, where we have no idea how the AI represents meaning internally, and we can only describe the decisions made in abstract mathematical terms. This is not much help when, for example, we need to justify why an AI system took a particular action.
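To make this fragility concrete, here is a minimal sketch using only the Python standard library. It assumes a made-up "real world" process (`true_process`, a curve) and a deliberately simple model (a straight line fitted by least squares). None of these names come from a real system. The model is trained on inputs between 0 and 1, then tested both on similar inputs and on inputs from a range it has never seen.

```python
import random


def fit_line(xs, ys):
    """Least-squares fit of y = a*x + b; returns (a, b)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    a = cov / var
    return a, mean_y - a * mean_x


def mean_abs_error(model, xs, ys):
    """Average absolute difference between predictions and true values."""
    a, b = model
    return sum(abs(a * x + b - y) for x, y in zip(xs, ys)) / len(xs)


def true_process(x):
    # Hypothetical ground truth: the relationship is curved, not linear.
    return x * x


rng = random.Random(0)  # fixed seed so the sketch is repeatable

# Train on inputs between 0 and 1.
train_x = [rng.uniform(0.0, 1.0) for _ in range(200)]
model = fit_line(train_x, [true_process(x) for x in train_x])

# Test on the same range, then on a range the model never saw.
same_x = [rng.uniform(0.0, 1.0) for _ in range(200)]
shifted_x = [rng.uniform(2.0, 3.0) for _ in range(200)]

error_same = mean_abs_error(model, same_x, [true_process(x) for x in same_x])
error_shifted = mean_abs_error(
    model, shifted_x, [true_process(x) for x in shifted_x]
)
print(f"error on familiar inputs: {error_same:.3f}")
print(f"error after conditions change: {error_shifted:.3f}")
```

The line is a reasonable approximation where it was trained, and badly wrong once the inputs move outside that range. Monitoring for this kind of change is one reason a deployed system may need re-training.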

We must pay close attention to these issues with AI. The good news is that the right technology and design processes can solve them in many cases.

How you can make best use of AI

The concerns highlighted here also apply to software and other technologies. They are generally well understood, and engineering offers tools and methodologies to manage them. From this insight, we summarise six principles for ethical AI in the diagram below.

These principles are especially important where AI could affect safety, in applications like control of machinery, vehicles, or healthcare, or in critical financial or business processes.

When developing safety-critical or medical devices, we are careful to analyse how the system might fail, what the effects might be, and to test them in practice. From this, we model the key risks, and take steps where necessary to reduce them. We use an appropriate quality system, document the design and validation process, and retain this information for the life of the product.
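As a rough illustration of modelling key risks, the sketch below keeps a small risk register and flags the entries that most need attention. Everything in it is an assumption made for this example: the hazards, the 1 to 5 scales, the severity-times-probability score and the threshold of 10 are not taken from any standard or from our own process, and a real project would define its own criteria.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    hazard: str
    severity: int      # 1 (negligible) to 5 (catastrophic), illustrative scale
    probability: int   # 1 (improbable) to 5 (frequent), illustrative scale

    @property
    def score(self) -> int:
        # Simple illustrative ranking: severity multiplied by probability.
        return self.severity * self.probability


def needs_mitigation(risks, threshold=10):
    """Return risks scoring at or above the threshold, highest first."""
    flagged = [risk for risk in risks if risk.score >= threshold]
    return sorted(flagged, key=lambda risk: risk.score, reverse=True)


# Hypothetical register entries for an AI system.
risks = [
    Risk("Model misclassifies input after conditions change", 4, 3),
    Risk("Training data under-represents a user group", 3, 4),
    Risk("User interface shows a stale prediction", 2, 2),
]

for risk in needs_mitigation(risks):
    print(f"{risk.score:>2}  {risk.hazard}")
```

Keeping a register like this alongside the design and validation records makes it easier to show which risks were considered and what was done about them.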

Next steps

We have set out here some important considerations and processes to help you assess the ethics of AI. The references provided below give further details in several areas we discussed.

It’s important to note that this summary is not exhaustive, and requirements vary from one application to another. Data scientists or developers on their own seldom have all of the skills needed to address the issues raised here. You will be legally responsible for the AI systems you develop, buy or put into production, so you should take expert advice.

Granta Innovation has practical experience gained from developing AI and software systems in a wide range of applications, and brings together a team with all the necessary expertise to address the challenges highlighted here. Get in touch if we could help.

By following these steps, you can ensure that you deliver genuine benefit with AI.

Links for further information

- Granta Innovation, What is AI, or what’s intelligent about machine learning?

- Bill Vaughan, 1969, “To err is human; to really foul things up requires a computer”

- House of Lords Select Committee on Artificial Intelligence, 2018, AI in the UK: ready, willing and able?

- Nick Bostrom, Future of Humanity Institute, and Eliezer Yudkowsky, Machine Intelligence Research Institute, 2011, The Ethics of Artificial Intelligence

- Graphcore, Accelerating machine learning for a world of intelligent machines

- Google, Cloud AutoML – Custom Machine Learning Models

- Darktrace, The Enterprise Immune System

- ISO 14971:2007, Medical devices — Application of risk management to medical devices

Author: Dr Antony Rix

Copyright © Granta Innovation Ltd, 2018-2020. All rights reserved.