… or what’s intelligent about machine learning?

Artificial intelligence (AI) is already having a significant impact, and has tremendous potential to make our lives better still. Yet it is a broad term and is often misunderstood as science fiction rather than software – which is why practitioners often prefer to use the term Machine Learning to describe their work.

This article explains what these terms mean by working through the following questions.

- What is AI?

- What is Machine Learning?

- What is Deep Learning?

- What else is AI?

- What next?

We’ll highlight how AI delivers value to people and businesses – and summarise some key concepts, limitations and strengths. Over the coming months we’ll explore these areas in more detail, and discuss the ethics of AI (Is AI any good?) and how to apply AI to your company.

If you want to research the applications, history or technical details of AI, the references at the end make a good start.

Granta Innovation helps businesses understand and implement the benefits that AI brings. Get in touch with us to receive further information about Granta Innovation, AI, IoT and related topics.

What is AI or Artificial Intelligence?

AI is a general term for techniques that allow computers to work with information to make predictions, and from this to make decisions. It encompasses a very wide range of overlapping methods and uses, some of which are illustrated in the diagram below.

AI is exciting because it works. Thanks to advances in computing power, software algorithms, and clever crowdsourcing to increase the amount of data available, in the last few years AI systems have become better than humans at tasks from playing games to recognising handwriting.

While much recent development has applied AI behind the scenes on computers and servers, AI is now used to enhance images on smartphones, and to control robots, machinery and cars. Some of the most dramatic and visible success is where AI is being applied to the interface with people – to recognise speech accurately, process an enormous variety of commands to simplify information search and interaction with institutions like banks, and answer questions to win a quiz show like Jeopardy against the best human opponents.

Those who develop AI are acutely aware of how far we are from “general” or “self-replicating” AI. Today’s systems are not intelligent in the human sense. They are machines running software that uses data and mathematical algorithms to make the best guess about what to do next.

We can already glimpse the practical future of AI in how it is being used today to:

- allow anyone to interact with things and with organisations, easily and quickly

- automate routine tasks from financial systems to legal work and production lines

- make cars safer and progressively allow them to drive themselves

- build robots that can navigate challenging environments and undertake more human tasks

- attack – and defend – systems, like computer networks

- recognise speech, text, images and people

- process and translate language

- synthesise speech, music and even art.

Of course, systems like these will affect people, just as automation, communication and computers have changed the world over the last two centuries. We will consider what this means in our next article, on the ethical implications of AI.

What is Machine Learning (ML)?

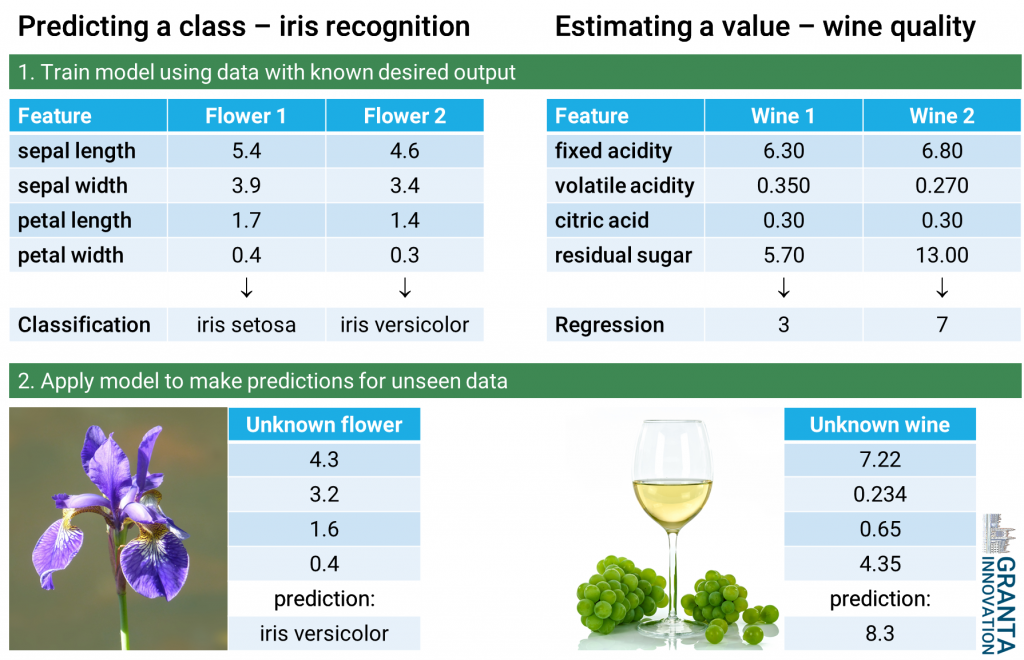

The biggest area of AI research today seeks to enable computers to make inferences from complex data. Techniques to do this are termed machine learning. Two examples below illustrate classification (of iris flowers) and regression (estimating the quality of wine from scientific measurements).

Common to these methods is that data is represented by numerical features. Machine learning creates a model of these features. For the two examples above, we start with some training data points where the desired output is known, and can use this to teach the model (by updating its internal weights) to predict these wanted values. Once trained, the model can then be used to make predictions with unseen data. This is known as supervised learning.
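As a rough illustration of supervised learning, the sketch below trains a one-feature regression model by repeatedly adjusting its two weights to reduce the error on known examples, then predicts a value for an unseen input. The data is made up for illustration (it is not the wine dataset), and the names `train_linear_model` and `predict` are hypothetical.

```python
# Toy supervised learning: fit y = w*x + b by gradient descent.
# The training data is invented for illustration and follows y = 2x + 1.

def train_linear_model(xs, ys, learning_rate=0.05, epochs=2000):
    """Return (w, b) that approximately minimise the mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradient of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Update the model's internal weights a small step downhill.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

def predict(model, x):
    w, b = model
    return w * x + b

train_x = [0.0, 1.0, 2.0, 3.0, 4.0]
train_y = [1.0, 3.0, 5.0, 7.0, 9.0]
model = train_linear_model(train_x, train_y)
prediction = predict(model, 5.0)  # an input not seen during training
```

With this data the prediction for an input of 5 comes out very close to 11, the value the underlying rule would give.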

However, there are many scenarios where outputs are not available, but machine learning can still be used to identify patterns in data. Models can be trained to pick out distinct, independent features, for example different types of sound, or classify data into similar groups. Other approaches teach models to predict future values in a series using historical data, for example to learn how share prices change. These processes are collectively termed unsupervised learning.
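To make the idea of grouping unlabelled data concrete, here is a minimal sketch of k-means clustering, one common unsupervised method. The points and starting centroids are invented for illustration, and the function name `k_means` is hypothetical.

```python
import math

def k_means(points, centroids, iterations=10):
    """Group points around the nearest centroid; no labels are needed."""
    labels = []
    for _ in range(iterations):
        # Assign each point to its nearest centroid.
        labels = [
            min(range(len(centroids)), key=lambda c: math.dist(p, centroids[c]))
            for p in points
        ]
        # Move each centroid to the mean of the points assigned to it.
        new_centroids = []
        for c in range(len(centroids)):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                new_centroids.append(
                    tuple(sum(axis) / len(members) for axis in zip(*members))
                )
            else:
                new_centroids.append(centroids[c])  # keep an empty cluster's centroid
        centroids = new_centroids
    return labels, centroids

# Invented data: two loose groups of 2-D points, with no labels attached.
points = [(1.0, 1.0), (1.2, 0.8), (0.8, 1.1), (5.0, 5.0), (5.2, 4.9), (4.8, 5.1)]
labels, centroids = k_means(points, centroids=[points[0], points[-1]])
```

The algorithm is never told which group a point belongs to; the two groups emerge from the distances between the points alone.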

Reinforcement learning is a way of getting ML to teach itself to solve problems by training it to maximise a reward, such as a points score. DeepMind used it impressively to learn how to play computer games, and then the board game Go.
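The sketch below shows reward-driven learning on a deliberately tiny problem, using tabular Q-learning, a classic reinforcement learning method. It is far simpler than the systems used for computer games or Go. The five-cell corridor environment and all names are assumptions made for illustration.

```python
import random

N_STATES = 5        # corridor cells 0..4; reaching cell 4 ends the episode
ACTIONS = (-1, +1)  # step left or step right

def step(state, action):
    """Toy environment: reward 1.0 for reaching the last cell, otherwise 0."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    reward = 1.0 if next_state == N_STATES - 1 else 0.0
    return next_state, reward

def q_learning(episodes=500, alpha=0.5, gamma=0.9, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action index]
    for _ in range(episodes):
        state = 0
        while state != N_STATES - 1:
            a = rng.randrange(2)  # explore at random; Q-learning is off-policy
            next_state, reward = step(state, ACTIONS[a])
            # Nudge the estimate towards reward + discounted best future value.
            target = reward + gamma * max(q[next_state])
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q

q_table = q_learning()
# Greedy policy: best action index in each non-terminal cell (1 means "right").
policy = [row.index(max(row)) for row in q_table[:-1]]
```

Nobody tells the agent that moving right is correct. It only receives a reward at the end of the corridor, and the learned values propagate back from there until the greedy policy heads right from every cell.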

Model structures and training processes vary widely. Especially when data is scarce, decades-old regression methods can often give good solutions. More recent approaches include decision forests, gradient boosted decision trees, support vector machines, neural networks, belief networks, convolutional networks and recurrent networks.

What is Deep Learning?

Some of the most significant breakthroughs in recent years relate to a set of techniques known as deep learning. These have extended machine learning to tackle hard, and very large, problems.

Most applications of deep learning work with neural networks.

Neural networks are inspired by connections in the human brain, but have a simple mathematical model illustrated in the diagram below. This neural network could be applied to the regression example above. It has four inputs, two hidden layers of four nodes (or neurons) each, and one output. Each neuron takes a weighted sum of the results of the previous stage plus an offset weight, and passes the single value this gives through a mathematical mapping known as the activation function. This structure is well suited to modelling complex patterns in data.
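The calculation each neuron performs can be sketched in a few lines. The example below builds a network of the shape described above (four inputs, two hidden layers of four neurons, one output). The weights are placeholders chosen by hand so the result is easy to check, and the choice of ReLU as the activation function is an assumption; a trained network would learn its weights from data.

```python
def dense_layer(inputs, weights, biases, activation):
    """Each neuron: weighted sum of the inputs, plus an offset, through an activation."""
    return [
        activation(sum(w * x for w, x in zip(neuron_weights, inputs)) + bias)
        for neuron_weights, bias in zip(weights, biases)
    ]

def relu(value):
    return max(0.0, value)

def identity(value):
    return value

def forward(inputs, layers):
    """Pass the inputs through each (weights, biases, activation) layer in turn."""
    values = inputs
    for weights, biases, activation in layers:
        values = dense_layer(values, weights, biases, activation)
    return values

# Placeholder weights, picked by hand rather than learned.
hidden_weights = [[0.25, 0.25, 0.25, 0.25] for _ in range(4)]
hidden_biases = [0.0, 0.0, 0.0, 0.0]
output_weights = [[1.0, 1.0, 1.0, 1.0]]
output_biases = [0.5]

network = [
    (hidden_weights, hidden_biases, relu),       # hidden layer 1: 4 neurons
    (hidden_weights, hidden_biases, relu),       # hidden layer 2: 4 neurons
    (output_weights, output_biases, identity),   # output layer: 1 neuron
]

output = forward([1.0, 2.0, 3.0, 4.0], network)  # a list holding one value
```

With these placeholder weights every hidden neuron outputs 2.5, so the single output is 4 × 2.5 + 0.5 = 10.5.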

While neural networks have been known since 1949, they have a problem. As the number of neurons and layers grows, the number of free parameters (weights) increases rapidly. This makes them hard to train in practice, leading to poor fits and overfitting.
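To see how quickly the number of weights grows, the sketch below counts the free parameters of a fully connected network from its layer sizes. The function name `count_parameters` and the larger layer sizes are hypothetical, chosen only to show the scale.

```python
def count_parameters(layer_sizes):
    """Weights plus offsets for a fully connected network, e.g. [4, 4, 4, 1]."""
    return sum(
        inputs * outputs + outputs  # one weight per connection, one offset per neuron
        for inputs, outputs in zip(layer_sizes, layer_sizes[1:])
    )

small = count_parameters([4, 4, 4, 1])          # the small network described above
larger = count_parameters([784, 512, 512, 10])  # hypothetical sizes for a digit recogniser
```

The small network has 45 parameters, while the hypothetical larger one has 669,706, each of which has to be set by training.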

Innovations by Geoffrey Hinton and others from 2006 onwards found ways to address these issues and successfully train very large models using lots of data and computing power. These enabled a breakthrough in performance, cutting error rates from 10-50% to less than 5% in benchmark tasks in speech and digit recognition. With these innovations, neural networks became deep.

Modern speech and image recognition systems, and natural language processing, rely heavily on deep learning applied to convolutional or recurrent neural networks.

Overfitting remains a key issue with neural and deep networks, even with significant recent improvements, as we shall see. What this means is that the predictor becomes very well matched to the data (or the type of data) used in its training, but doesn’t generalise well, and in the worst case behaves very unpredictably, when presented with realistic but different data. This is why it’s important to test any AI system thoroughly, with unseen data and real-world scenarios.
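A contrived example can show why testing with unseen data matters. Below, a model that simply memorises its training data scores perfectly on that data but worse on new points, while a much simpler model does the opposite. The data is hand-made with two deliberately wrong training labels, and the names `memoriser` and `fit_threshold` are hypothetical.

```python
# Hand-made data: the true rule is "label 1 when x >= 5",
# but two training labels (at x = 2 and x = 7) are deliberately wrong.
train = [(0, 0), (1, 0), (2, 1), (3, 0), (4, 0), (5, 1), (6, 1), (7, 0), (8, 1), (9, 1)]
test = [(x + 0.2, 1 if x >= 5 else 0) for x in range(10)]  # unseen points, clean labels

def memoriser(x):
    """1-nearest-neighbour: copy the label of the closest training point."""
    return min(train, key=lambda point: abs(point[0] - x))[1]

def fit_threshold(data):
    """Pick the single cut-off that makes the fewest training mistakes."""
    def mistakes(t):
        return sum((x >= t) != bool(label) for x, label in data)
    return min((x for x, _ in data), key=mistakes)

def accuracy(model, data):
    return sum(model(x) == label for x, label in data) / len(data)

threshold = fit_threshold(train)

def simple_model(x):
    return int(x >= threshold)

results = {
    "memoriser": (accuracy(memoriser, train), accuracy(memoriser, test)),
    "threshold": (accuracy(simple_model, train), accuracy(simple_model, test)),
}
```

Here the memoriser reaches 100% on its training data but only 80% on the unseen points, because it has learned the noise along with the pattern. The threshold model scores 80% in training yet gets every unseen point right. Real data is rarely this tidy, but the gap between training and test performance is the signal to look for.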

What else is AI?

First, we should highlight some things that AI is not.

- AI is nothing approaching a sentient being or lifeform in its own right

- It’s seldom the full solution to a problem on its own – much more is required to provide input data in the right form, and make use of the outputs

- AI isn’t perfect: it often requires large amounts of data to make useful predictions, and is subject to bias and other limitations that often occur in training data

- While AI is increasingly easy to use, these and other issues mean that care should always be taken to make sure its limitations and risks are understood and it is used to best effect.

AI and ML are large and rapidly-developing fields. While it’s impossible to capture their full potential in a short article, we wanted to explore two areas where AI is making exciting progress.

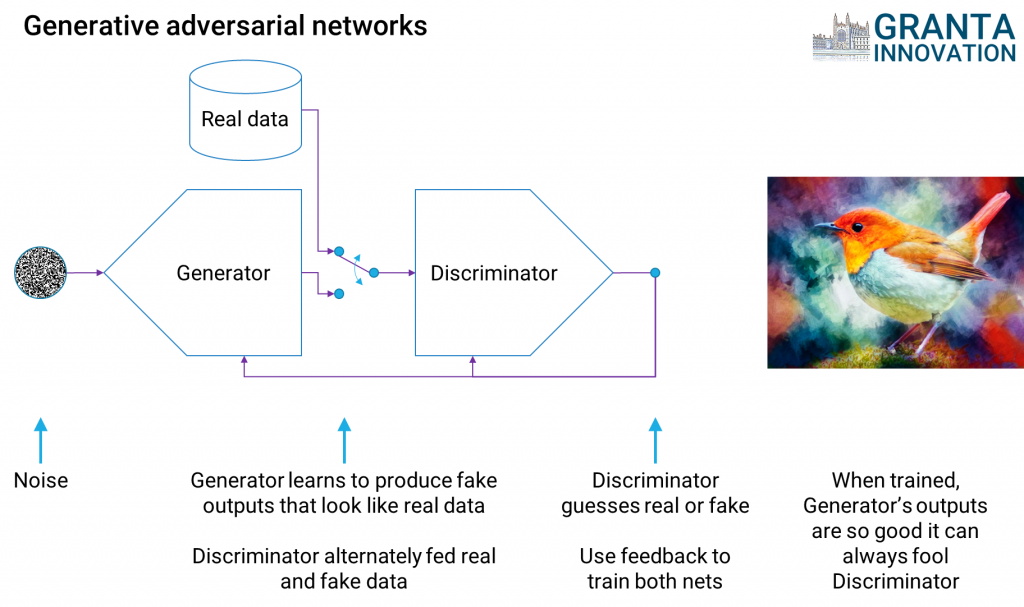

Generative adversarial networks (GANs) – a tool for creativity

We described above how deep neural networks can be trained to make predictions. In 2014 Ian Goodfellow, Yoshua Bengio and collaborators introduced a powerful new idea: train one network to fool another. This can be used for nefarious purposes – for example, to make almost imperceptible changes to pictures to fool image recognisers. But it also turns out to be a great way to improve the training of deep networks, and generate an endless stream of plausible-looking but artificial data like the synthetic bird concept below.
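For readers who want the mathematics, the 2014 paper listed in the references frames this contest as a two-player game between a generator network G, which turns random noise z into artificial data, and a discriminator network D, which estimates the probability that a sample is real:

```latex
\min_G \max_D V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}(x)}\left[\log D(x)\right]
  + \mathbb{E}_{z \sim p_z(z)}\left[\log\left(1 - D(G(z))\right)\right]
```

The discriminator is trained to increase this value by telling real from generated samples, while the generator is trained to decrease it by producing samples the discriminator accepts as real.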

The future of computer interfaces

When did you last interact with an institution like a bank, struggle to do what you wanted, and end up wasting a lot of time before speaking to an operator? AI can help, using chatbots.

Most of us have experienced these through Amazon Alexa, Apple Siri, or Google Assistant, and you may have seen IBM Watson in action. Interfaces like this work well both through speech recognition, thanks to deep learning, and through text interfaces on web and mobile applications, Facebook, Twitter and Weibo.

It’s not just the Internet giants who are building this technology: large traditional businesses and startups are developing chatbots, including our friends at Agilis AI, whose assistant Archie is pictured here.

Microsoft’s Cortana technologies have been used to create a particularly compelling chatbot with a personality and understanding of both the user and the context of conversation – Microsoft Xiaobing or Xiaoice (微软小冰). While this is so far focused on the Chinese market, expect more of this to come to you soon.

What next?

For consumers and individuals, AI is already here. Use it to have fun, learn more easily, and free up time to do what you enjoy. If you’re thinking about your studies, maths, engineering and computer science are key to gaining an edge in AI. There are excellent resources and openings now if you want to develop AI yourself. If you want to play with it and see its potential, use AI on your smartphone or buy an Alexa device, and be sure to work through the privacy settings to be comfortable with what you share.

For employees, AI is a concern and an opportunity. AI will change the way we work, just as machines automated manual processes, computers became central to office functions, and Internet search made information accessible. Clearly the impact on some jobs will be greater than others. Tree surgeons and medical workers are not going to be replaced by a computer any time soon; healthcare is one area that could benefit hugely from AI and the resources it can free up.

Knowledge workers will be affected more, especially where tasks are routine and rules-based. If that describes your work, expect to use AI to make you more productive, and look to use it to improve your work/life balance. And AI will create entirely new jobs – could you be a self-driving car developer, or AI trainer?

For businesses and organisations, AI is bringing enormous change. Embrace its advantages to get ahead of competitors. You need to understand what AI you are using already, what data you have or can get, and the business processes, market opportunities, products or services that benefit most. Plan and set resources to build on this. Get in touch with Granta Innovation if we might be able to help you navigate this.

Links for further information

- Granta Innovation, 2018, The ethics of AI: is artificial intelligence any good?

- Agilis AI, 2018, Archie – Your Ai Assistant

- Jo Best, TechRepublic, 2013, IBM Watson: The inside story of how the Jeopardy-winning supercomputer was born, and what it wants to do next

- Cambridge Consultants, 2018, AI: Understanding and harnessing the potential

- Frank Chen, Andreessen Horowitz, 2017, A16Z Playbook

- DeepMind, 2017, The story of AlphaGo so far

- Granta Innovation, 2018, The future of technology

- Ian J. Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, Yoshua Bengio, Université de Montréal, 2014, Generative Adversarial Networks

- David Kelnar, MMC Ventures, The state of AI 2017: Inflection Point

- House of Lords Select Committee on Artificial Intelligence, 2018, AI in the UK: ready, willing and able?

- Microsoft, 2017, Xiaoice (in Chinese)

- Udemy, 2017, Artificial Intelligence: Learn How to Build An AI

- Haohan Wang, Bhiksha Raj, Carnegie Mellon University, 2017, On the Origin of Deep Learning

Data sources for machine learning examples

- Dua, D. and Karra Taniskidou, E., 2017, University of California, Irvine, UCI Machine Learning Repository: Iris dataset

- Dua, D. and Karra Taniskidou, E., 2017, University of California, Irvine, UCI Machine Learning Repository: Wine quality dataset

Author: Dr Antony Rix

Copyright © Granta Innovation Ltd, 2018-2019. All rights reserved.